An exploratory POC: could a digital human handle Indian telecom self-service?

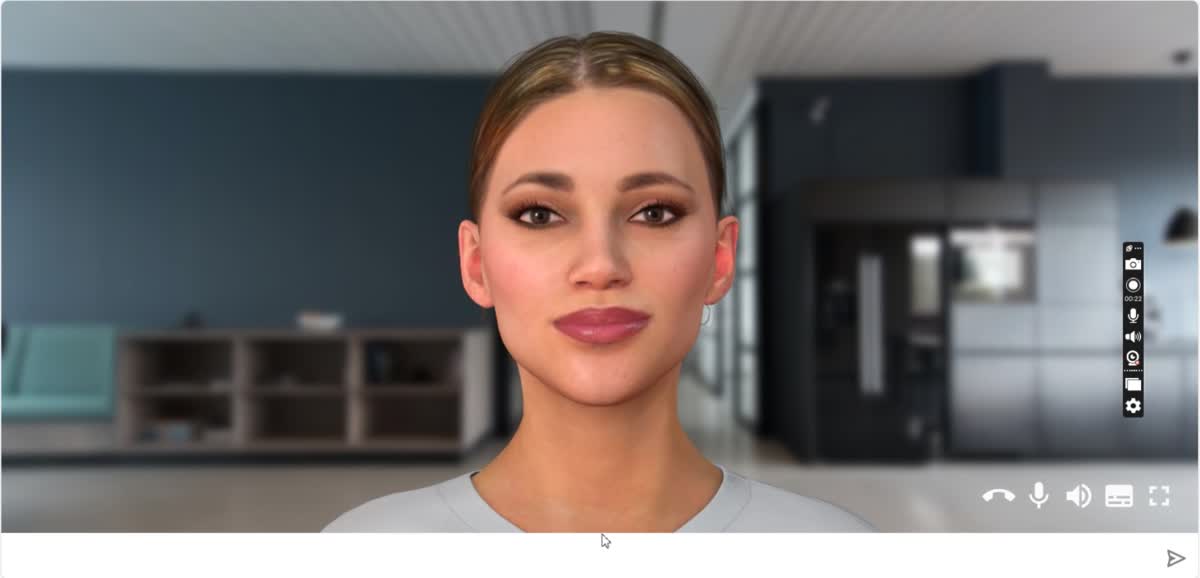

Alongside the production work, I led an exploratory POC asking a different question: what if the next generation of self-service for Indian telecom customers wasn't text or voice, but a photorealistic digital human who could hold a face-to-face video conversation in multiple Indian languages?

The hypothesis: for low-trust, first-time-online users, the cognitive overhead of a chat interface or voicebot may be higher than we assumed. A face, even a synthetic one, might lower the barrier to engaging with self-service for users who default to "talk to a human at the store" because that's what feels safe.

The POC was scoped, prototyped, and presented. It surfaced as many questions as answers, including uncanny-valley discomfort for some user segments, latency tradeoffs, and vendor-platform dependencies. The work served as an input to broader strategy conversations about where conversational AI should and shouldn't go for this customer base.

Detailed walk-through available on request, under appropriate confidentiality.